No, Artificial Intelligence Won't Take Over the World

From François Chollet’s The impossibility of intelligence explosion:

“And what is the end result of this recursively self-improving process? Can you do 2x more with the software on your computer than you could last year? Will you be able to do 2x more next year? Arguably, the usefulness of software has been improving at a measurably linear pace, while we have invested exponential efforts into producing it. The number of software developers has been booming exponentially for decades, and the number of transistors on which we are running our software has been exploding as well, following Moore’s law. Yet, our computers are only incrementally more useful to us than they were in 2012, or 2002, or 1992.”

Who is this François guy? An AI researcher at Google who created one of the most popular Deep Learning frameworks.

In the context of digital, it is fascinating to realize that digital hasn’t changed how we live our lives. We buy/rent a house or apartment. We drive our cars or ride the train to work. We push digital paper around at work. Netflix made TV a better experience, but it is still watching TV. Airbnb made it easier to book an exciting home somewhere, but it hasn’t changed travel. The channels and tools have changed, lessened the friction we have to deal with, maybe made us more productive, but at a fundamental level, our activities have not changed.

And that’s ok. The problem is that our expectations are out of whack: we think every new piece of technology is life-changing. All information now gets broadcast at hyper-speed and hyper-volume, and we have no practical way to filter and process it. We end up treating everything as equally important all the time.

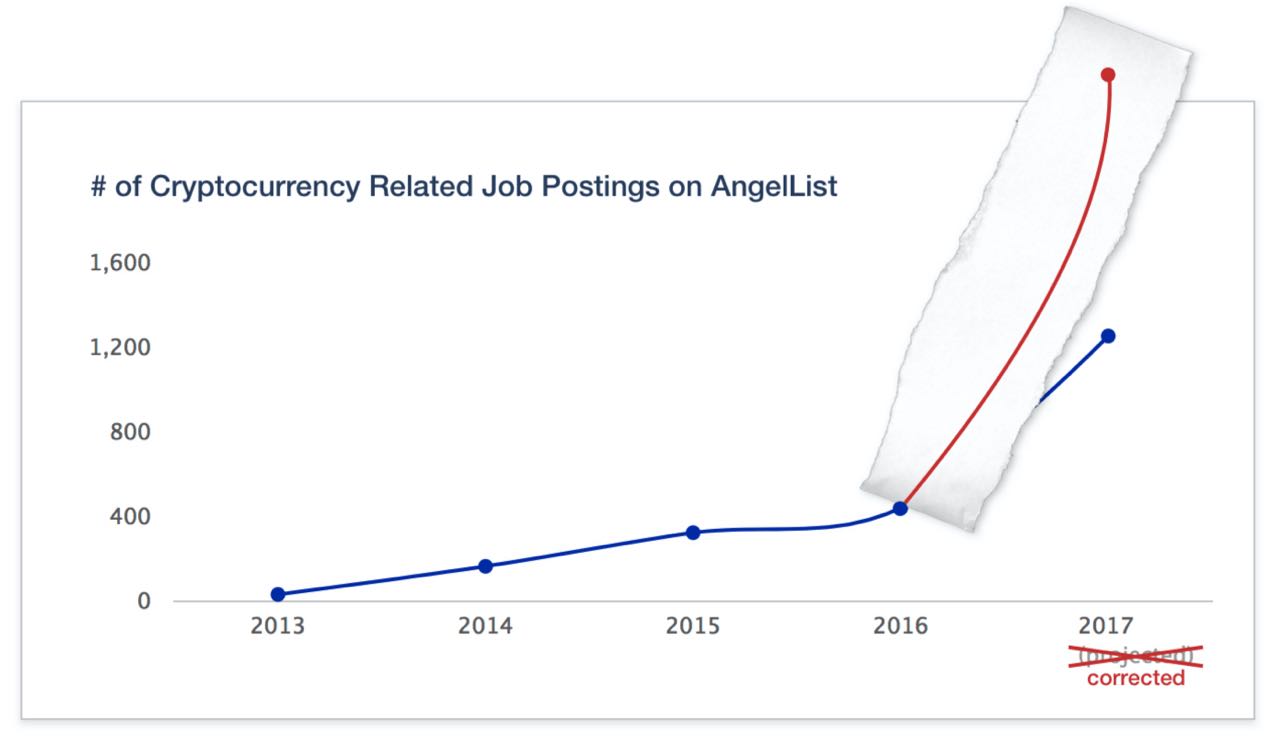

Of course, we can see this in its full glory with bitcoin and blockchain technology today. I ran into this visual earlier in the week:

From an AngelList newsletter:

“Key takeaway: Blockchains are the biggest technological breakthrough since the Internet.”

Now, I have nothing against blockchain and bitcoin. There are extremely promising uses for them, but every time I see a ‘once in a generation’ type statement, I’m reminded of Roy Amara’s quote:

“We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.”

Very few companies have gotten big data right. Most current applications of AI, more specifically supervised learning, require large amounts of clean data. So that is going to be a challenge. And instead of figuring all that out, we will be moving on to the blockchain. Funny. Or not.

Read about the type of discussion McKinsey is having with clients about digital and marketing. Is your data in silos? Do you have to re-orient employee mindsets to put customers at the center?

It is almost 2018. Why are we still having these types of discussions?

Back to AI. Most executions of digital have focused on process, efficiency, and cost instead of being transformational, and AI might turn out the same. Check out Andreessen Horowitz’s State of AI video. It has lots of examples of reducing friction and doing existing activities better and faster, but nothing new.

Yet.

This is not to say that old-economy jobs won’t be destroyed and supplanted with new ones, or that existing companies won’t get wiped out and new ones created. As an example, listen to episode 27 of the Rad Awakenings podcast, where they discuss a new company that arbitrages interest rates, other macroeconomic information, and payment terms to elicit discounts from vendors. This is possible now that we have the computational power and the ability to aggregate massive amounts of data.

AI is not as earth-shattering as harnessing electricity or practicing agriculture for the first time. We expected flying cars by now. Instead, we got electric cars.